The following letter is adapted from a two-part presentation to the Upper School delivered in Brauer Auditorium on February 24 and March 10.

On Monday, February 23, approximately $640 billion of S&P 500 market capitalization was erased, in large measure by a viral Substack post, The 2028 Global Intelligence Crisis, whose authors projected an economically devastated near-term future. Imagining the coming two years as recent history, they “recalled” in June 2028 the rapid emergence since our present day of “a negative feedback loop with no natural brake” in which “AI capabilities improved, companies needed fewer workers, white collar layoffs increased, displaced workers spent less, margin pressure pushed firms to invest more in AI, AI capabilities improved,” and so on in destructive repetition. They called it “the human intelligence displacement spiral.”

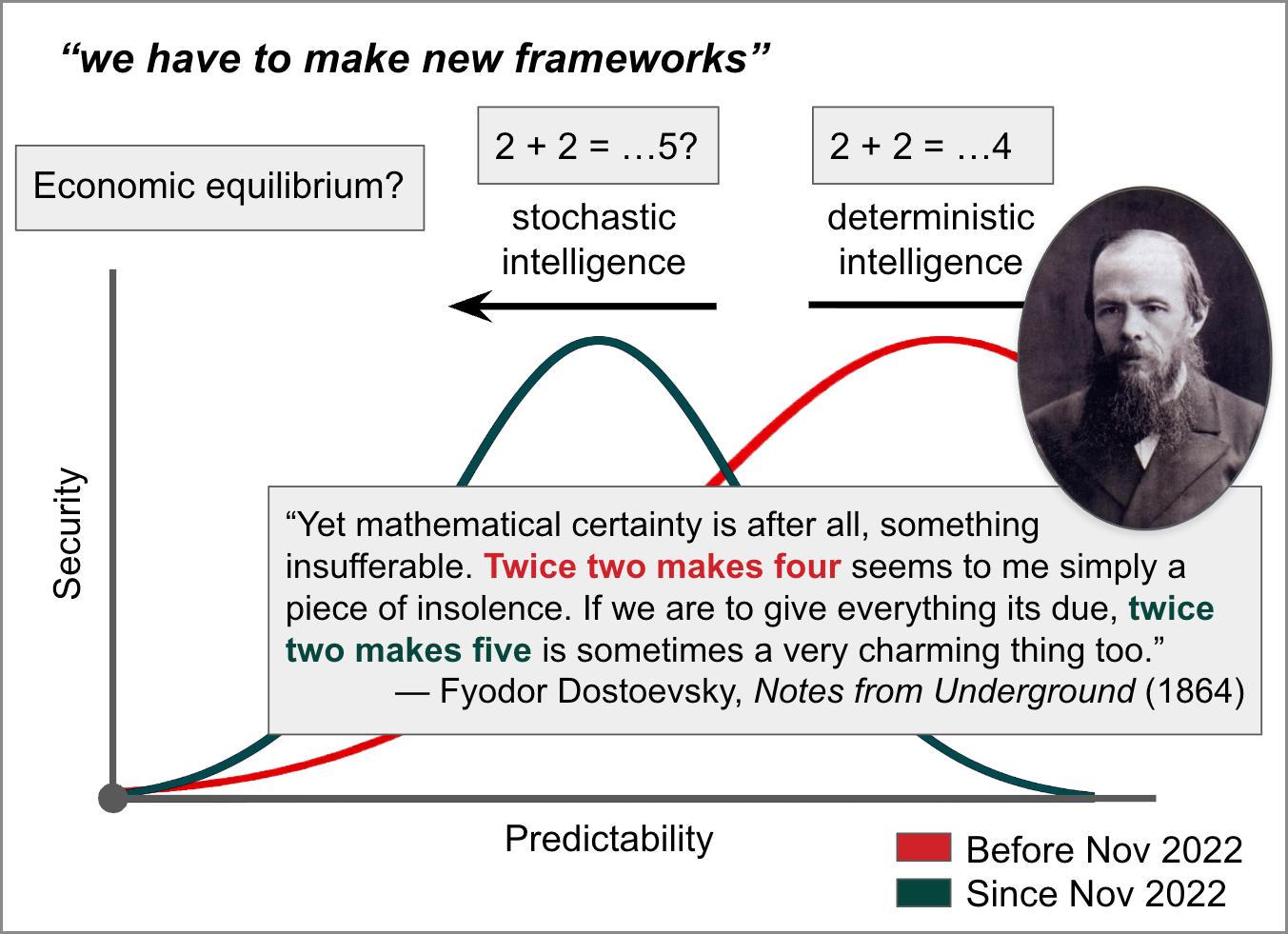

“For the entirety of modern economic history,” the authors contended, “human intelligence derived its inherent premium from its scarcity. We are now experiencing the unwind of that premium. Machine intelligence is now a competent and rapidly improving substitute for human intelligence across a growing range of tasks. The financial system, optimized over decades for a world of scarce human minds, is repricing. It is painful, disorderly, and far from complete. Nobody’s framework fits, because none were designed for a world where the scarce input became abundant. So we have to make new frameworks.”

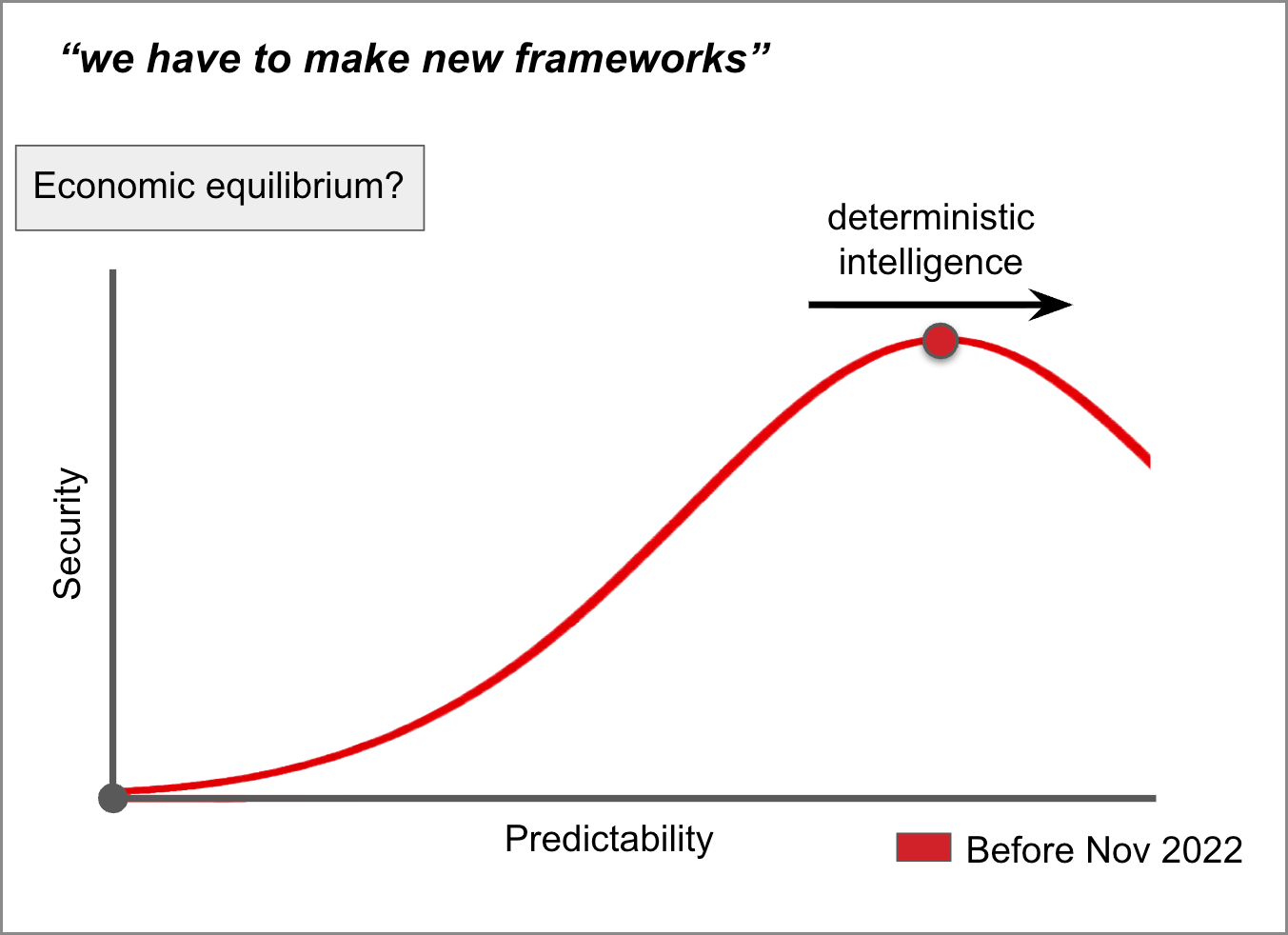

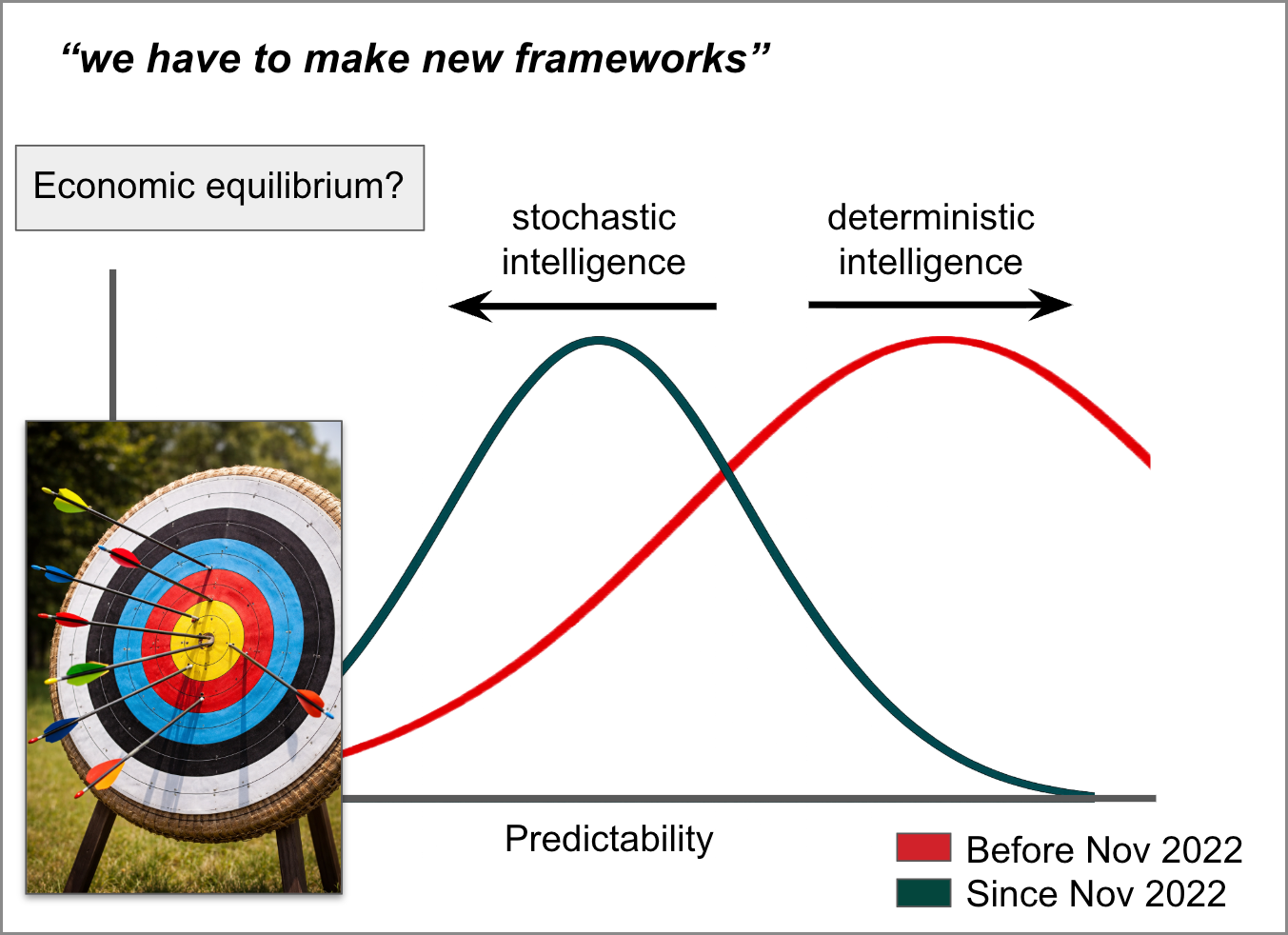

One such new framework might proceed from the assumption that economic security is a function of predictability and that, prior to OpenAI’s release of ChatGPT in November 2022 and the subsequent proliferation of large language model (LLM) AI technologies, human beings in educational and professional settings maximized their security principally through behaviors correlated with predictability—memorization, repetition, conscientiousness, consistency, and conformity, for example.

Since November 2022, however, greater educational and professional security may obtain in complementing these deterministic behaviors with others less correlated with predictability—creativity, spontaneity, risk tolerance, trial and error, and ingenuity, for example. The word “stochastic,” which computer scientists use to describe the introduction of randomness into LLM AI processes, derives from the Ancient Greek word for an archery target. In a perfectly deterministic system, the flight path of the arrow never changes. In a hybrid deterministic and stochastic system, the flight path of the arrow is generally true to the target but varies with each shot.

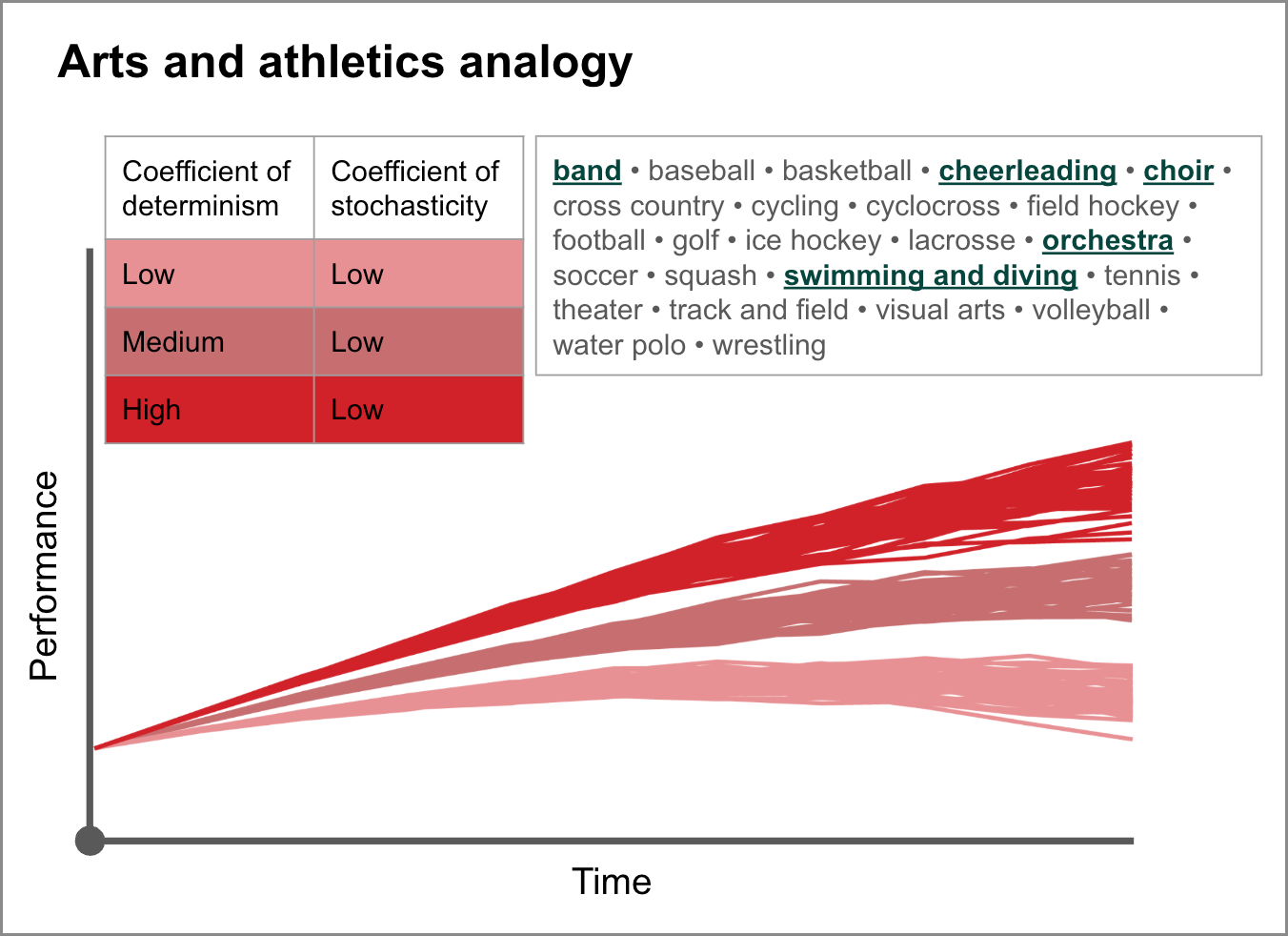

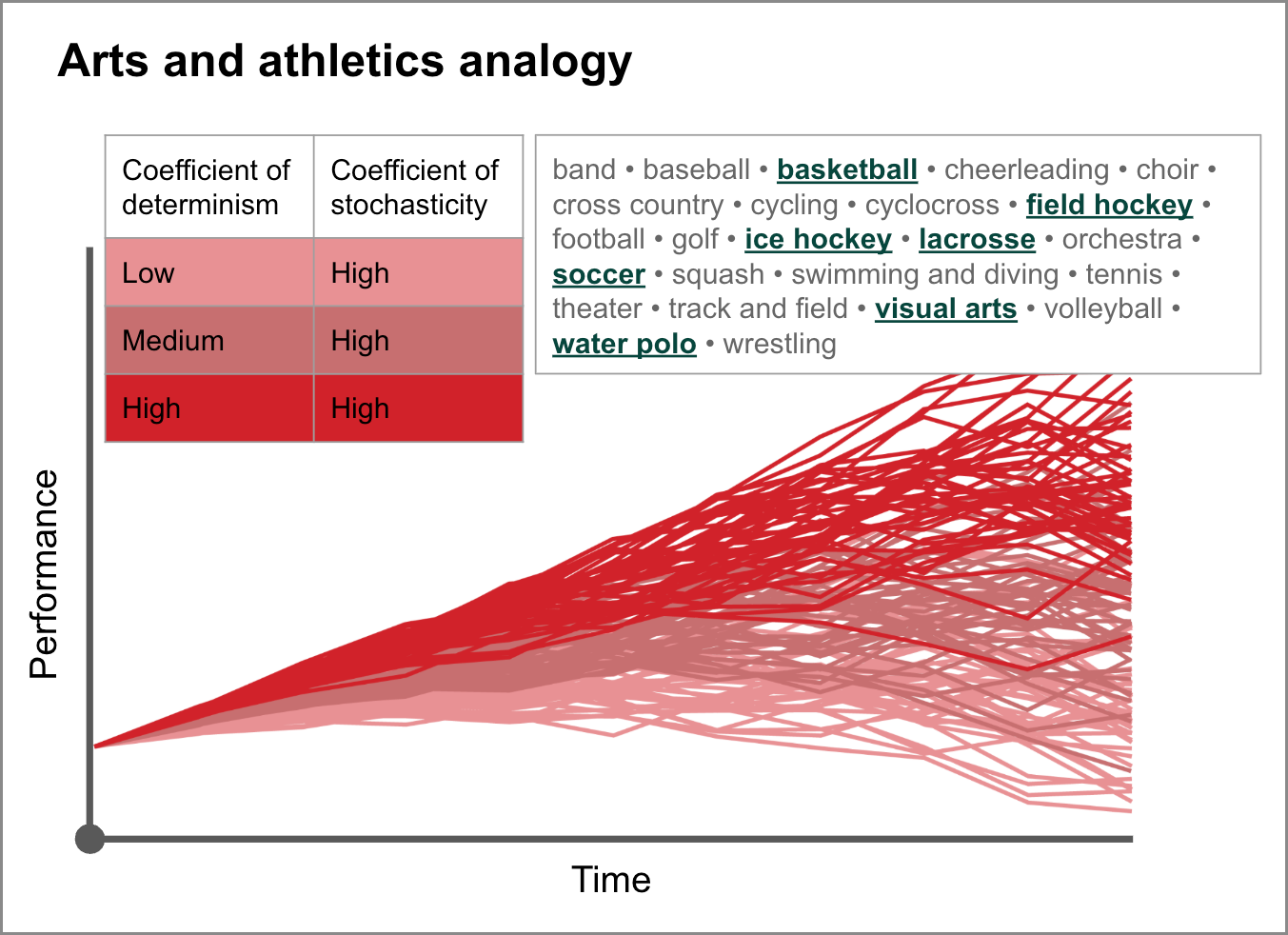

Our arts and athletics offerings at MICDS are, like archery, analogous to the design of LLM technologies. Some are much more deterministic than stochastic—more predictable than random—and performance is therefore highly correlated with behaviors like consistency and repetition. Other programs, however, are significantly stochastic. After the ball is inbounded in a basketball or soccer game, for instance, the events that follow, with so much opportunity for variety, are nearly impossible to predict; and the production of visual art—painting a blank canvas, say, or shaping an undefined lump of clay—is perhaps least predictable of all. How wonderful that so many different kinds of artistic and athletic experiences, each requiring its own balance of deterministic and stochastic intelligences, are available to our students in anticipation of their “real-world” lives.

In imagining a “new framework” of human endeavor in the face of LLM AI technologies, the point is not to abandon the cultivation of deterministic intelligences in favor of stochastic ones, any more than we would want to abandon those of our MICDS program offerings that are more deterministic than stochastic in nature. The fact that computers are far better calculators and memorizers than we could ever be should not suggest that we give up calculating and memorizing. Calculating and memorizing are inherently valuable skills. The rise of LLM AI should only suggest that these deterministic intelligences have become insufficient in and of themselves—insufficient for our economic security and insufficient as well, I would argue, for our happiness.

I am reminded that as long ago as 1864—158 years before ChatGPT made its debut—the Russian writer Fyodor Dostoevsky posited a similar connection between unpredictability and happiness. “Mathematical certainty is after all, something insufferable,” says the narrator of his novella Notes from Underground. “Twice two makes four seems to me simply a piece of insolence. If we are to give everything its due, twice two makes five is sometimes a very charming thing too.” The point is not to abandon the determinism of twice two makes four; the point is to embrace simultaneously—and strategically, in the face of the rise of LLM AI—the stochasticity of twice two makes five.

For our moment of reflection, I will ask you a question that I could pose to our very youngest Lower School students just as easily. It may sound like a trick question, but it is not. I ask only that you contemplate it a little while before settling on the answer in your mind. It is simple but perhaps not so simple to answer: What is your favorite color?

A machine can pretend to be original—can incorporate stochasticity to an extent that mimics originality—but a machine cannot be original. A machine can mimic artistry, but it is not an artist. It can mimic athleticism, but it is not athletic. It can mimic laughter, but it cannot laugh. It cannot feel joy. A machine cannot have a favorite color. You can and do have a favorite color. It is from this more ennobling understanding of human intelligence that the journey toward the reestablishment of our security can begin.

Always reason, always compassion, always courage. My best wishes to you and your loved ones for a joyful spring break.

Jay Rainey

Head of School

This week’s addition to the “Refrains for Rams” playlist: I Guess Time Just Makes Fools of Us All by Father John Misty (Apple Music / Spotify)